Human Operator

A human augmentation tool that allows an AI to control your body with EMS, helping you learn new physical skills. Winner of MIT HARD MODE.

Project Details

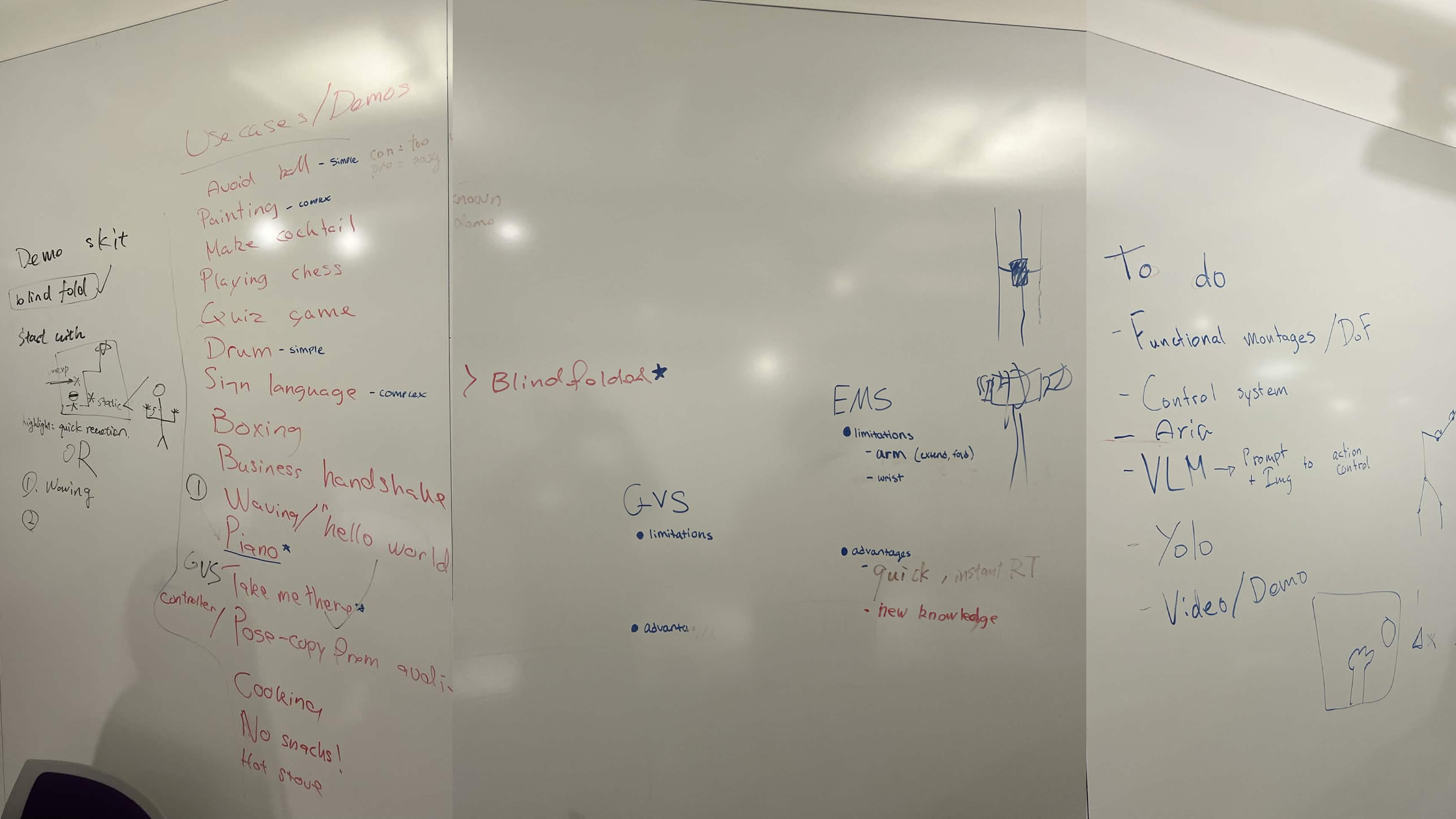

I showed up to the Media Lab for the 48-hour hackathon (MIT HARD MODE) knowing absolutely nobody, and found a team of five others I had never met before but who shared my interests. We didn't entertain normal ideas because building another tool that just spits out chat bubbles or generated art bored us, and this was HARD MODE. We wanted to do something completely unhinged: see if we could hack humanity itself into a direct hardware peripheral for an algorithm. Wiring raw nervous systems up to decision-support systems over a single weekend was chaotic, but feeling a machine successfully hijack your joints to carry out physical actions in the real world was a truly rewarding experience.

Inspiration

The inspiration came from a simple question: what if learning a new physical skill felt less like a struggle and more like a guided experience? We're surrounded by AI that can process information and generate complex plans, but the output is usually text or images. What if the output was direct physical assistance? We were fascinated by the idea of using AI to close the loop between seeing, understanding, and acting, using the human body itself as the output device. This led us to explore Electrical Muscle Stimulation (EMS) not as a therapeutic tool, but as a medium for instruction and augmentation.

Overview

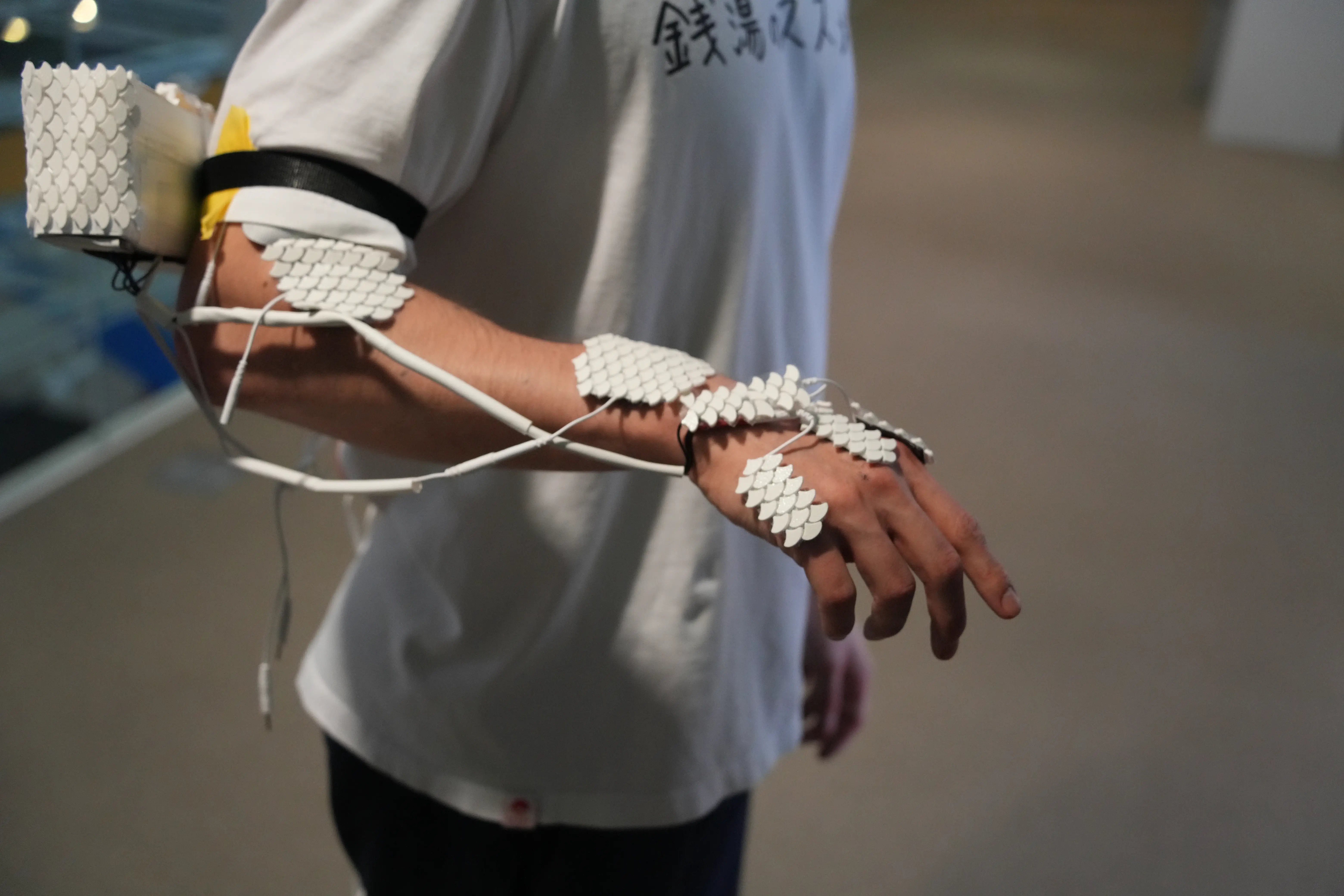

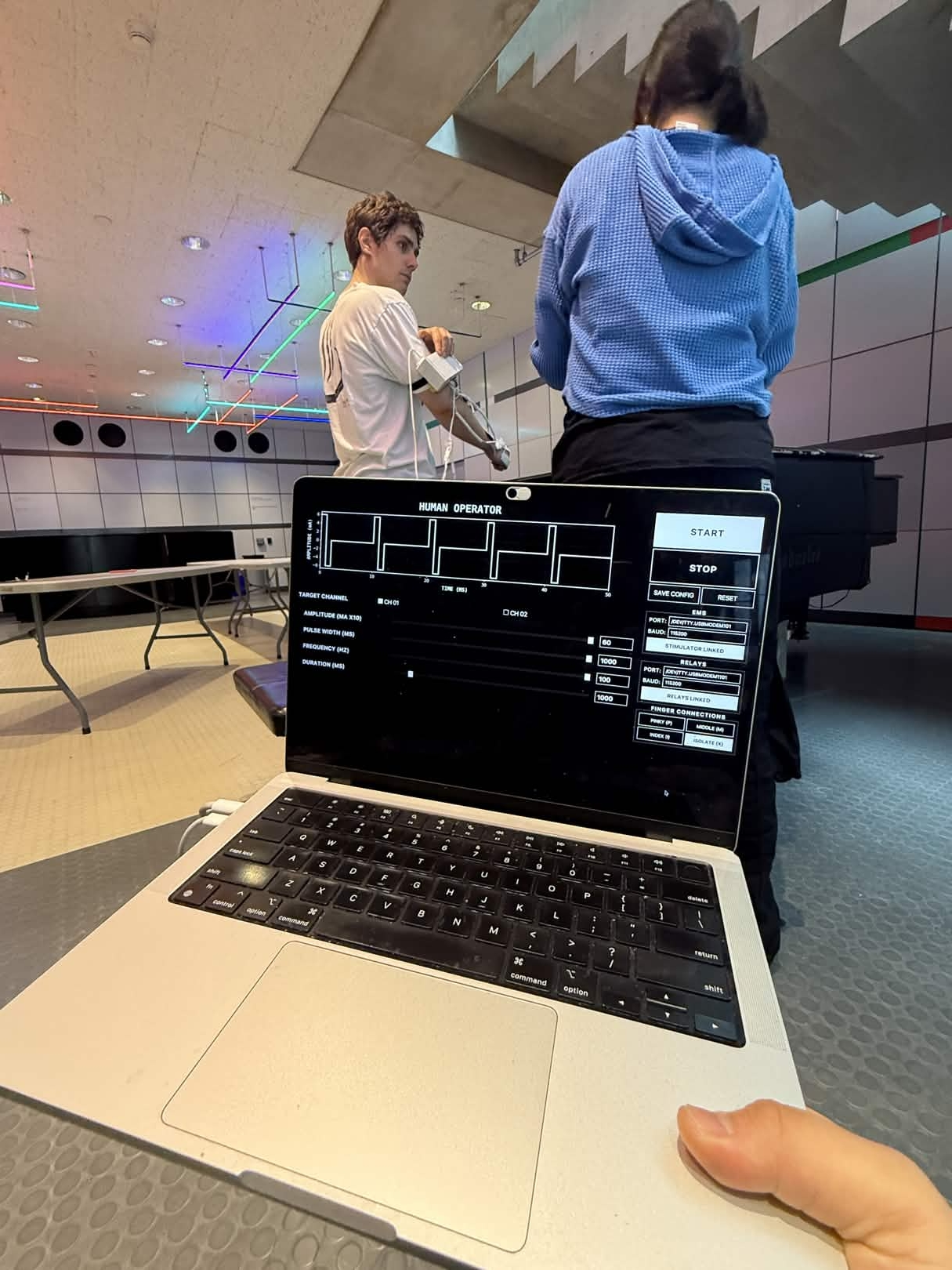

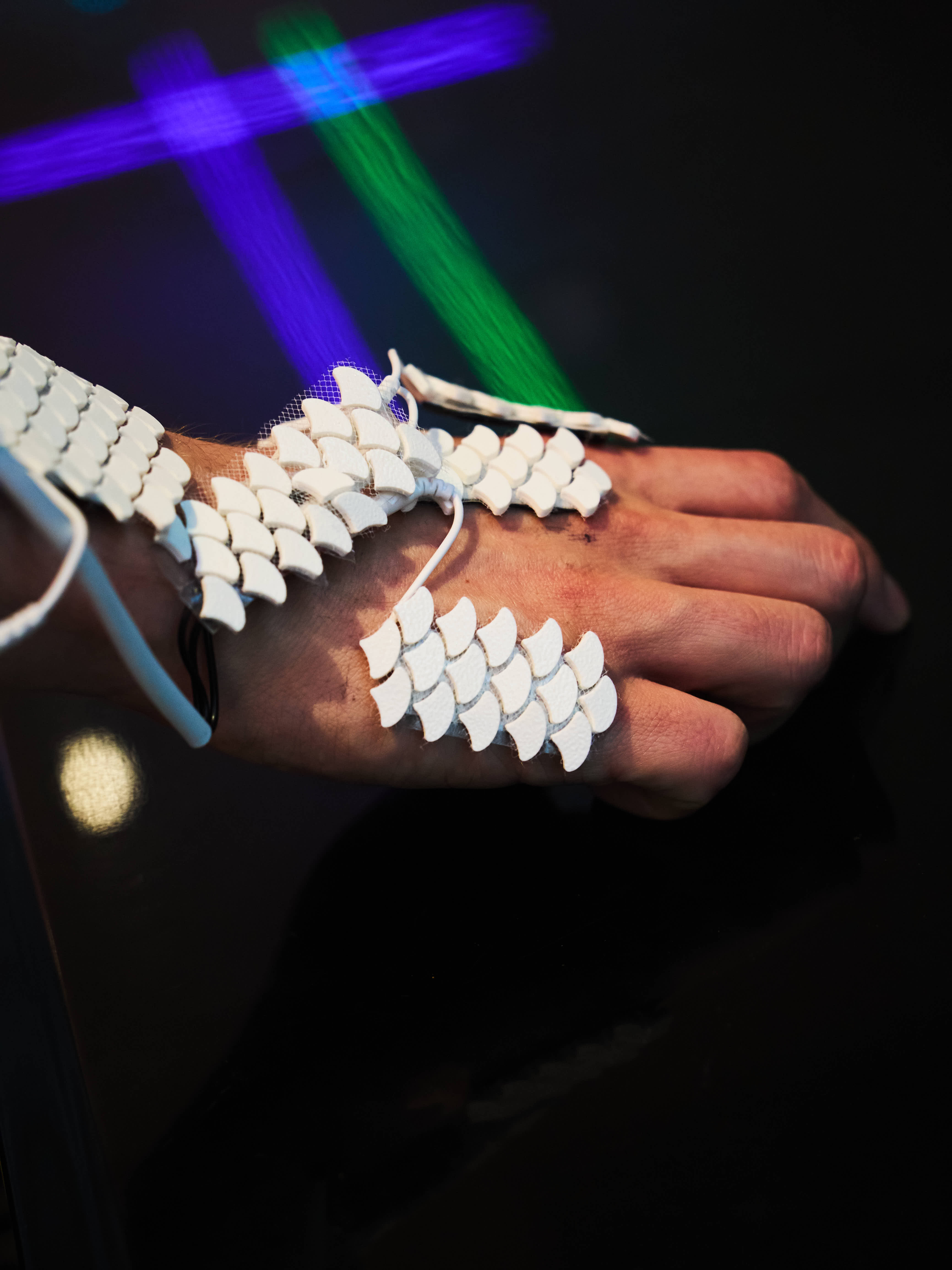

Human Operator is a system that allows an AI to guide your hand and finger movements using EMS. You wear a camera that provides a first-person point of view to a Vision-Language Model (VLM). When you give a voice command like "Hey Operator, play the melody," the AI analyzes the video feed (e.g., seeing a piano), formulates a plan, and sends signals to an Arduino-controlled EMS unit strapped to your arm. The EMS unit then stimulates the appropriate muscles to make your fingers press the keys in sequence. The goal is to create a seamless feedback loop in which AI perception guides physical action in real time.
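The loop described above can be sketched as a single handler. This is a minimal illustration under stated assumptions, not the team's code: the wake-phrase check, the `capture_frame`, `ask_vlm`, and `send_command` callables, and the plain-string command format are all hypothetical stand-ins for the camera, the Claude API call, and the link to the Arduino.

```python
from typing import Callable, List, Optional

# Hypothetical trigger phrase, matched case-insensitively at the start of the utterance.
WAKE_PHRASE = "hey operator"


def extract_instruction(utterance: str) -> Optional[str]:
    """Return the instruction that follows the wake phrase, or None if absent."""
    text = utterance.strip()
    if not text.lower().startswith(WAKE_PHRASE):
        return None
    # Drop the wake phrase and any separating comma or spaces.
    rest = text[len(WAKE_PHRASE):].lstrip(" ,")
    return rest or None


def handle_utterance(
    utterance: str,
    capture_frame: Callable[[], bytes],
    ask_vlm: Callable[[bytes, str], List[str]],
    send_command: Callable[[str], None],
) -> List[str]:
    """Run one pass of the sense -> reason -> actuate loop.

    Returns the motor commands that were dispatched (empty if not triggered).
    """
    instruction = extract_instruction(utterance)
    if instruction is None:
        return []
    frame = capture_frame()              # first-person camera snapshot (stub)
    plan = ask_vlm(frame, instruction)   # e.g. ["press index finger", ...]
    for command in plan:
        send_command(command)            # forwarded to the EMS hardware in order
    return plan


if __name__ == "__main__":
    sent: List[str] = []
    plan = handle_utterance(
        "Hey Operator, play the melody",
        capture_frame=lambda: b"<jpeg bytes>",
        ask_vlm=lambda frame, instr: ["press index finger", "lift index finger"],
        send_command=sent.append,
    )
    print(plan)
```

Passing the three stages in as callables keeps the orchestration testable without a camera, an API key, or electrodes attached.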

Left to right: AI stimulates the wrist to say 'Hello' back • AI stimulates fingers in sequence to play a melody • AI stimulates fingers to form the 'OK' sign

System Architecture

The project is a demonstration of real-time human-computer integration, connecting a powerful cloud-based AI to local hardware for precise physical control.

- Sensing: A camera captures the user's first-person perspective, streaming video to the main application. Voice commands are captured to trigger the AI.

- Reasoning: The video stream and voice command are sent to a Vision-Language Model (in our case, Anthropic's Claude API). The VLM interprets the scene and the user's intent to generate a sequence of motor commands (e.g., "press index finger," "lift middle finger").

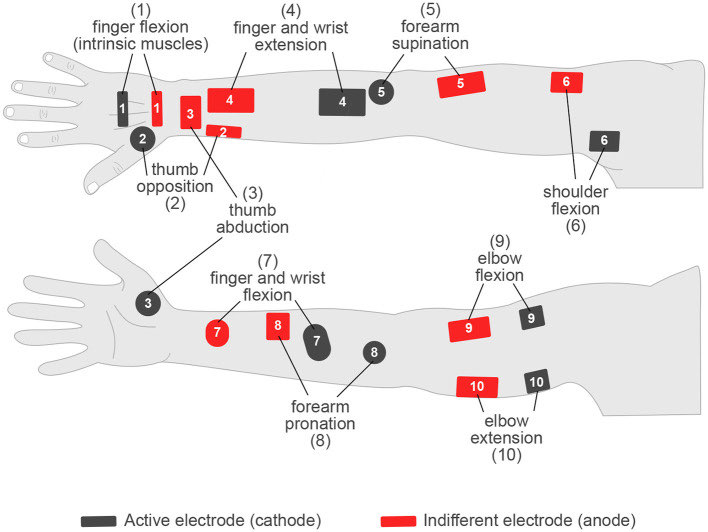

- Actuation: These commands are sent to a Flask server running locally, which communicates with an Arduino microcontroller via serial. The Arduino controls a set of relays connected to a TENS/EMS unit. The relays direct electrical pulses to gel electrodes placed on the user's arm, stimulating the muscles that control finger and wrist movements.
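The Actuation step needs an agreed message format between the Flask server and the Arduino. The post does not specify one, so the sketch below assumes a hypothetical line-based protocol (`<channel>:<1|0>` plus a newline, one relay per target) and a hypothetical target-to-relay channel map. It turns VLM output such as "press index finger" into the bytes that would be written to the serial port. In a real setup those bytes could be sent with pySerial (`serial.Serial(port, baudrate).write(...)`); that part is left out so the sketch runs without hardware.

```python
import re
from typing import Dict, Tuple

# Hypothetical mapping from movement target to relay channel on the Arduino.
RELAY_CHANNELS: Dict[str, int] = {
    "thumb": 0,
    "index": 1,
    "middle": 2,
    "ring": 3,
    "pinky": 4,
    "wrist": 5,
}

# Assumed wiring: state 1 closes the relay so the TENS/EMS output reaches that
# electrode pair; state 0 opens it again.
ACTIONS: Dict[str, int] = {"press": 1, "lift": 0}

# Accepts e.g. "press index finger", "lift middle finger", "press wrist".
COMMAND_RE = re.compile(r"^\s*(press|lift)\s+(\w+)(?:\s+finger)?\s*$", re.IGNORECASE)


def parse_command(text: str) -> Tuple[int, int]:
    """Parse a motor command string into a (channel, state) pair."""
    match = COMMAND_RE.match(text)
    if match is None:
        raise ValueError(f"unrecognized motor command: {text!r}")
    action, target = match.group(1).lower(), match.group(2).lower()
    if target not in RELAY_CHANNELS:
        raise ValueError(f"unknown target: {target!r}")
    return RELAY_CHANNELS[target], ACTIONS[action]


def encode(channel: int, state: int) -> bytes:
    """Encode one relay change as an ASCII line terminated by a newline."""
    return f"{channel}:{state}\n".encode("ascii")


if __name__ == "__main__":
    for command in ["press index finger", "lift index finger", "press wrist"]:
        print(command, "->", encode(*parse_command(command)))
```

One reason to keep the Arduino side this simple (a "set relay N on/off" interpreter) is that all sequencing and timing decisions then stay in Python, where they are quicker to change during a hackathon.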

The entire loop, from voice command to muscle actuation, is designed to be fast enough to feel interactive and responsive.
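One way to check whether the loop actually feels interactive is to time each stage separately. The helper below is a hypothetical addition, not part of the original project; it uses `time.perf_counter` to accumulate per-stage durations so the slowest step can be identified. The `time.sleep` calls in the demo are placeholders for real work.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator


class StageTimer:
    """Accumulates wall-clock durations (in milliseconds) per named stage."""

    def __init__(self) -> None:
        self.durations_ms: Dict[str, float] = {}

    @contextmanager
    def stage(self, name: str) -> Iterator[None]:
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the elapsed time even if the stage raised an exception.
            elapsed = (time.perf_counter() - start) * 1000.0
            self.durations_ms[name] = self.durations_ms.get(name, 0.0) + elapsed

    def total_ms(self) -> float:
        return sum(self.durations_ms.values())

    def slowest(self) -> str:
        return max(self.durations_ms, key=self.durations_ms.__getitem__)


if __name__ == "__main__":
    timer = StageTimer()
    with timer.stage("sensing"):
        time.sleep(0.01)    # placeholder for grabbing a camera frame
    with timer.stage("reasoning"):
        time.sleep(0.05)    # placeholder for the VLM request
    with timer.stage("actuation"):
        time.sleep(0.005)   # placeholder for the serial write
    for name, ms in timer.durations_ms.items():
        print(f"{name:>10}: {ms:6.1f} ms")
    print(f"{'total':>10}: {timer.total_ms():6.1f} ms (slowest: {timer.slowest()})")
```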

Map showing anode and cathode placements for targeted muscle actuation.

Discussion

Human Operator is a step towards a future of "on-body intelligence," where AI doesn't just give you information, but physically assists you in executing tasks. The experience of having your own hand move under AI control is both strange and powerful. It opens up possibilities for accelerated skill learning, remote assistance, and new forms of artistic expression.

During the MIT HARD MODE hackathon, we demonstrated the system's ability to teach simple piano melodies, perform sign language gestures, and even complete fine motor tasks. The project was recognized as the winner of the "Learn" track, highlighting its potential in education and skill acquisition.

Of course, this technology also raises important ethical questions about agency, control, and the boundary between human and machine. Our work is intended as a provocation, a tangible prototype to spark discussion about what it means to collaborate with AI on a deeply physical level.

This project was a team effort, and I'm grateful to have worked with Peter He, Ashley Neall, Valdemar Danry, Daniel Kaijzer, and Yutong Wu.